What are AI tools being used for in employment? Right now, the answer to that is “just about everything.” But as AI increasingly shapes hiring, employee development, succession planning, and other key employment decisions, legislation is evolving in response. In this article, Affirmity Principal Business Consultant Patrick McNiel, PhD, looks at AI law in key states, as well as some recent lawsuits that are shaping the legality of AI use.

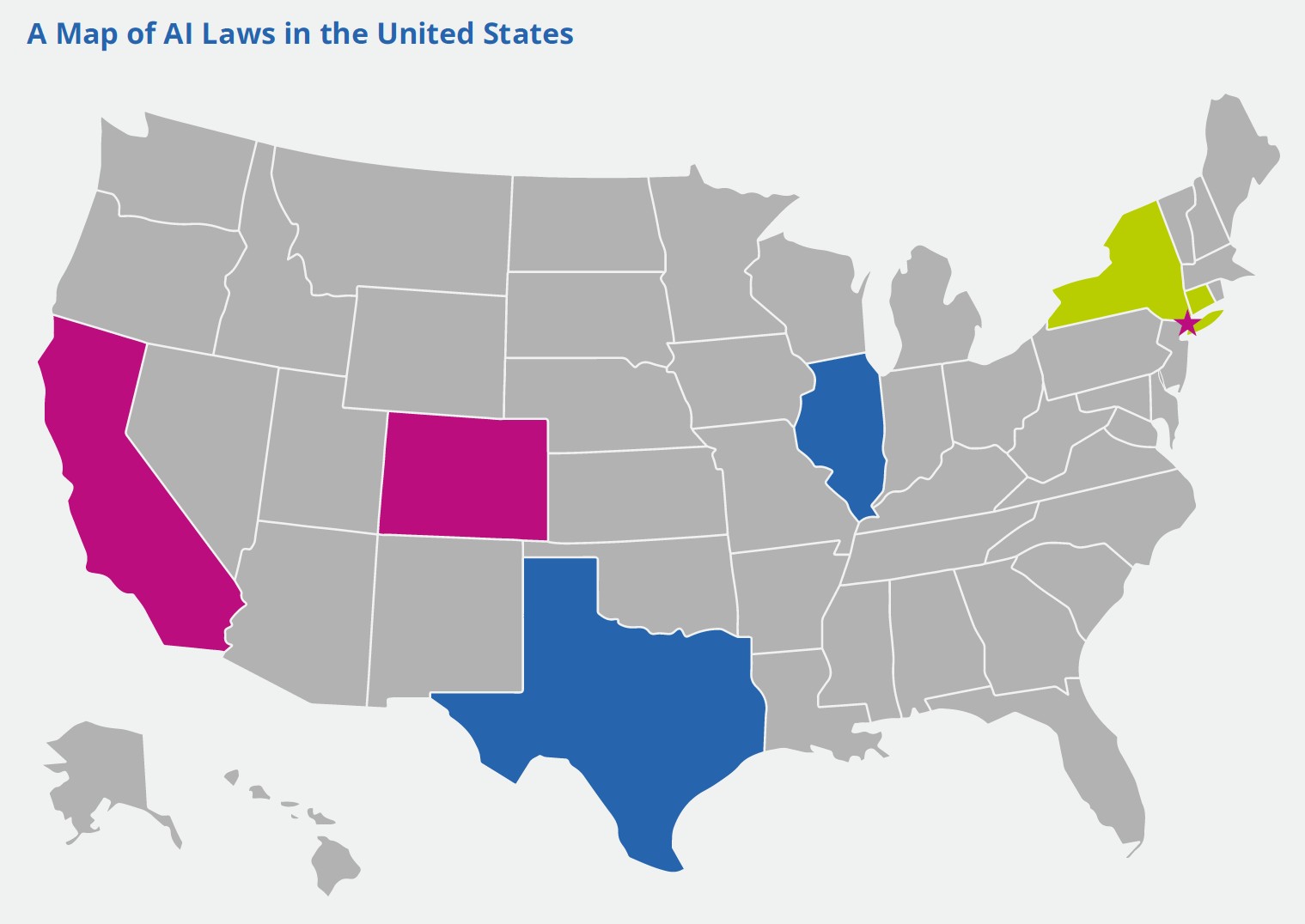

The map above shows state and local governments with existing or upcoming laws related to AI use in employment decisions. At present, these laws can be sorted into three categories:

- Pink: Denotes governments that require or compel an AI bias audit where AI tools are used for employment decisions. Currently, this includes California, Colorado, and New York City.

- Blue: AI bias audits have become advisable in these jurisdictions due to strengthened EEO laws, requirements that increase exposure, or provisions that reduce risk when these audits are conducted.

- Green: These governments have proposed AI-based employment legislation that has momentum behind it. (Note that while Connecticut’s law died in 2025, a similar law is expected to be proposed in 2026.)

AI Law in California

In June 2025, California’s Civil Rights Council made AI-related changes to its regulations, which came into effect on October 1, 2025. The changes augment the state’s Fair Employment and Housing Act (FEHA) regulations.

Under the amended regulations, anti-bias testing of AI tools, or similar proactive efforts, will be considered when claims are evaluated. This is being interpreted to mean that organizations that have not conducted such an audit will have a harder time defending their use of AI in employment decisions if it is challenged in California.

The amended regulations also reinforce disparate impact theory and incorporate the Uniform Guidelines on Employee Selection Procedures (UGESP) by reference. They additionally specify that employers must retain AI decision-related data for four years.
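Because the UGESP is now part of the picture, its long-standing “four-fifths” rule of thumb is a useful illustration of what a basic disparate impact screen looks like. The following is a minimal sketch only: the group labels, counts, and function names are hypothetical, and the four-fifths comparison is a UGESP rule of thumb rather than a test spelled out in the FEHA changes described above. A real audit would typically go further (for example, with statistical significance testing).

```python
from typing import Dict, List, Tuple

# Each group maps to (number of applicants, number selected).
Counts = Dict[str, Tuple[int, int]]


def selection_rates(counts: Counts) -> Dict[str, float]:
    """Selection rate per group: selected / applicants (groups with no applicants are skipped)."""
    return {group: selected / applicants
            for group, (applicants, selected) in counts.items()
            if applicants > 0}


def impact_ratios(counts: Counts) -> Dict[str, float]:
    """Each group's selection rate divided by the highest group's selection rate."""
    rates = selection_rates(counts)
    top_rate = max(rates.values())
    if top_rate == 0:
        raise ValueError("No group has any selections; impact ratios are undefined.")
    return {group: rate / top_rate for group, rate in rates.items()}


def flag_four_fifths(counts: Counts, threshold: float = 0.8) -> List[str]:
    """Groups whose impact ratio falls below the four-fifths (80%) rule of thumb."""
    return [group for group, ratio in impact_ratios(counts).items() if ratio < threshold]


# Hypothetical numbers, for illustration only.
example_counts: Counts = {
    "group_a": (200, 60),  # 30% selected
    "group_b": (150, 30),  # 20% selected
}

if __name__ == "__main__":
    print(impact_ratios(example_counts))
    print(flag_four_fifths(example_counts))
```

In this made-up example, group_b is selected at two-thirds the rate of group_a, which falls below the 80% rule of thumb and would warrant a closer look.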

California’s laws use the term “automated decision system,” stating that “an automated decision system may be derived from and/or use artificial intelligence, machine learning, algorithms, statistics, or other data processing techniques.” This means the law is not necessarily limited to what we normally think of as AI systems or large language models.

California has additionally made amendments to its Consumer Privacy Act, dictating that employers using automated decision systems must:

- Provide notice that AI tools are used

- Offer opt‑out unless human review is available

- Conduct risk assessments for automated hiring tools

AI Law in Colorado

Colorado’s law is SB24-205, Consumer Protections for Artificial Intelligence, and it comes into effect in June 2026. Colorado’s approach is a little different from those of the other states and draws heavily on the European Union’s Artificial Intelligence Act, which focuses on different levels of risk.

SB24-205 is particularly focused on “high-risk” systems that make consequential decisions, and it imposes a duty of reasonable care on developers (the people who create the AI systems) and deployers (the employers that utilize such systems). This law has a large number of requirements, but some highlights include:

- Deployers with 50 or more employees are required to complete an impact assessment for high-risk systems annually

- Additional assessments must also be run within 90 days of any substantial modification to the system

- The impact assessment is fairly involved and may require considerably more than the statistics that go into an impact analysis

- Deployers must maintain records for three years

- Deployers must notify users that AI is being used
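The timing requirements in the highlights above (an annual assessment, plus one within 90 days of a substantial modification) can be tracked with a simple date calculation. This is a rough sketch under stated assumptions: “annually” is approximated as 365 days after the last assessment, the function name and dates are hypothetical, and whether a change counts as a “substantial modification” is a legal judgment the code does not attempt to make.

```python
from datetime import date, timedelta
from typing import Iterable

ANNUAL_INTERVAL = timedelta(days=365)      # assumption: "annually" treated as 365 days
MODIFICATION_WINDOW = timedelta(days=90)   # "within 90 days of any substantial modification"


def next_assessment_due(last_assessment: date,
                        substantial_modifications: Iterable[date] = ()) -> date:
    """Earliest date by which the next impact assessment should be completed.

    Only modifications made after the last assessment create a new 90-day deadline.
    """
    annual_due = last_assessment + ANNUAL_INTERVAL
    modification_deadlines = [
        modified_on + MODIFICATION_WINDOW
        for modified_on in substantial_modifications
        if modified_on > last_assessment
    ]
    return min([annual_due, *modification_deadlines])


if __name__ == "__main__":
    # Hypothetical dates, for illustration only.
    last = date(2026, 7, 1)
    print(next_assessment_due(last))                        # annual deadline only
    print(next_assessment_due(last, [date(2026, 9, 15)]))   # modification pulls the deadline forward
```

A deployer would still need to pair a tracker like this with the three-year record retention requirement and legal review of what the statute actually counts.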

Colorado’s law refers specifically to “artificial intelligence systems,” which it defines as “any machine-based system that, for any explicit or implicit objective, infers from the inputs the system receives how to generate outputs, including content, decisions, predictions, or recommendations that can influence physical or virtual environments.”

AI Law in New York City

New York City Local Law 144 is a 2021 law that slightly pre-dates the mainstream LLM era of AI. It chiefly concerns a requirement that employers conduct an annual bias audit if they’re using “automated employment decision tools (AEDTs)” for employment decisions. Employers are required to:

- Cease using any AEDT that hasn’t had a bias audit within the last year

- Publicly post the results of their bias audits

- Notify employees and candidates that an AEDT is in use

The law defines an AEDT as “any computational process, derived from machine learning, statistical modeling, data analytics, or artificial intelligence, that issues simplified output, including a score, classification, or recommendation, that is used to substantially assist or replace discretionary decision making for making employment decisions that impact natural persons.” It also names several tools outside the definition (including junk email filters, spreadsheets, and firewalls).

A key phrase in the definition, “substantially assist,” attracted a number of questions, and was later clarified to mean:

- “to rely solely on a simplified output (score, tag, classification, ranking, etc.), with no other factors considered; or

- to use a simplified output as one of a set of criteria where the simplified output is weighted more than any other criterion in the set; or

- to use a simplified output to overrule conclusions derived from other factors, including human decision-making.”
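The three prongs of the “substantially assist” clarification can be read as a simple decision rule. The sketch below is a simplification for illustration, not a legal test: it assumes each decision criterion can be given a numeric weight, and the `ToolUsage` class, the `aedt_output` key, and the example weights are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict

AEDT_KEY = "aedt_output"  # hypothetical label for the tool's simplified output


@dataclass
class ToolUsage:
    # Relative weight of each criterion in the decision, including the tool's output.
    criterion_weights: Dict[str, float]
    # True if the tool's output can overrule conclusions from other factors,
    # including human decision-making.
    can_overrule_other_factors: bool = False


def substantially_assists(usage: ToolUsage) -> bool:
    """Apply the three prongs of the clarified definition to a described usage."""
    aedt_weight = usage.criterion_weights.get(AEDT_KEY, 0.0)
    if aedt_weight <= 0:
        return False  # the simplified output plays no part in the decision

    other_weights = [weight for name, weight in usage.criterion_weights.items()
                     if name != AEDT_KEY and weight > 0]

    # Prong 1: the simplified output is relied on solely, with no other factors.
    if not other_weights:
        return True
    # Prong 2: the simplified output is weighted more than any other criterion.
    if aedt_weight > max(other_weights):
        return True
    # Prong 3: the simplified output can overrule conclusions from other factors.
    return usage.can_overrule_other_factors


if __name__ == "__main__":
    screening = ToolUsage({AEDT_KEY: 0.3, "interview": 0.4, "resume_review": 0.3})
    print(substantially_assists(screening))
```

In practice, decision weights are rarely this explicit, which is part of why the phrase attracted questions in the first place.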

AI Law in Illinois

The Illinois Human Rights Act was recently updated to prohibit the use of AI that has the effect of subjecting employees to discrimination. This wording perhaps suggests the state is avoiding the term “disparate impact,” even though the phrase functionally means the same thing. While an AI bias audit is not explicitly called for by Illinois law, it seems likely that organizations will have considerable difficulty demonstrating that they have not “subjected employees to discrimination” without one.

The update to the law also requires that employees and candidates be notified that AI is being used for employment decisions.

Illinois defines artificial intelligence in this context as “a machine-based system that, for explicit or implicit objectives, infers, from the input it receives, how to generate outputs such as predictions, content, recommendations, or decisions that can influence physical or virtual environments.”

AI Law in Texas

The Texas Responsible Artificial Intelligence Governance Act prohibits the development or deployment of AI with the intent to unlawfully discriminate. Notably, this law again avoids using “disparate impact” in its terminology, except to note that disparate impact is not, by itself, sufficient to demonstrate intent to discriminate.

Ultimately, it’s still implied that the presence of adverse impact will support a case seeking to prove intent to discriminate. Furthermore, the law provides an “affirmative defense” for conducting bias assessments using recognized frameworks (including the NIST framework). Under this defense, a defendant can’t be found liable if they discover a violation through feedback, red teaming, or testing, provided they substantially comply with the management framework they’re using.

As in Illinois, while a “bias audit” is not mandated by the state, employers are strongly incentivized to conduct audits aligned with NIST standards to establish this legal defense.

Finally, it may be remarked that the Texas law has perhaps the most bare-bones definition for AI, i.e., “a machine-based system that infers from inputs it receives how to generate outputs.”

Upcoming and Proposed Laws

The following states have also been making moves towards their own AI laws:

- New York State has periodically introduced bills aimed at mirroring New York City’s law. New Jersey attempted to do the same, but the bill died in committee.

- Connecticut proposed a law closely akin to Colorado’s law. Though this died in committee in 2025, it’s expected to be renewed in 2026.

Federal-level Laws

The following federal laws naturally remain relevant to the deployment of employee selection tools of any type:

- Title VII of the Civil Rights Act of 1964

- Americans with Disabilities Act (ADA)

- Age Discrimination in Employment Act of 1967 (ADEA)

Additionally, the current administration issued an Executive Order, “Ensuring a National Policy Framework for Artificial Intelligence,” in December 2025, which was followed by a legislative framework in March 2026. This activity is aimed at preventing a patchwork of state regulations and favors less stringent regulatory hurdles for AI use. It includes the creation of a task force to sue states whose AI laws do not align with the administration’s vision for AI enforcement. This is expected to have something of a chilling effect on states that pass these laws, but it doesn’t appear to have deterred a number of recent efforts.

Lawsuits Brought Against AI Hiring Systems

While legislation is being shaped at the state level and challenged by federal oversight, the courts are also shaping the legal framework for AI use in organizations’ decision-making.

ACLU of Colorado v. HireVue and Intuit

This March 2025 lawsuit saw the American Civil Liberties Union (ACLU) of Colorado file a complaint on behalf of a deaf and Indigenous woman against Intuit (her employer) and HireVue Inc. (a software developer). The lawsuit alleges that the AI-backed interview platform used by Intuit in its process performs worse when evaluating non-White and deaf or hard-of-hearing speakers.

The employee, who sought a promotion, allegedly requested human-generated captioning as an accommodation but was denied. The employee was subsequently rejected for the promotion due to her communication style. As a consequence, the ACLU claims HireVue and Intuit violated the ADA, Title VII, and the Colorado Anti-Discrimination Act.

What makes this case interesting is that, in the past, a request for accommodation involving a human generating captions was considered reasonable and would not have been questioned. With the rise of AI captioning systems, it appears that expectations and perceptions of what constitutes a hurdle may have changed. This case is still being litigated, and the outcome is not yet known.

Mobley v. Workday

This is a collective action lawsuit alleging discrimination on the basis of several protected characteristics: age (40+), race, and disability. Mobley applied to over 100 jobs with companies that use Workday’s AI tools and says he was rejected in every case.

The resulting national collective action is currently in the opt-in phase, and the case rests on disparate impact theory. The results of the case could determine a lot about what is legally acceptable or unacceptable in terms of AI usage.

Harper v. Sirius XM Radio, LLC

In this class-action lawsuit, Harper claims that he was rejected from around 150 jobs, some within minutes of applying, despite being qualified. He alleges that the AI used education, institution, zip code, employment history, and other data points that are clearly related to race in a way that perpetuates bias.

This case again rests on disparate impact as well as disparate treatment.

Kistler et al. v. Eightfold AI Inc.

This class-action lawsuit is another interesting one, as it has broad implications, and the laws being triggered here are different from what you may expect.

The plaintiffs’ complaint is that AI systems are profiling them using information gathered from third-party sources, such as social media profiles, LinkedIn, GitHub, location data, internet and device activity, cookies, and other forms of tracking. The profiles generated via all this assembled data include personal characteristics such as preferences, predispositions, behavioral tendencies, attitudes, intelligence, ability, and aptitude. These profiles are then sold to employers without candidate knowledge or a method for recourse or correction.

While this is an issue under several of the upcoming laws mentioned above, it also potentially violates California’s Investigative Consumer Reporting Agencies Act (ICRAA) and the federal Fair Credit Reporting Act (FCRA).

Keep Learning About AI Use in Employment Decisions

This article is an extract from our ebook on this turbulent and important topic, “AI Use in Employment Decisions and the Emergence of AI Bias Audits.” In the full, 20-page ebook, we additionally cover:

- Examples of common uses for AI tools

- The benefits and drawbacks of using AI tools and traditional tools

- How organizations can approach an AI bias audit in order to stay compliant

Download the full ebook and learn how to use AI in your processes while remaining compliant. And for AI-related risk assessment and analysis assistance, contact us today.

About the Author

Patrick McNiel, PhD, is a Principal Business Consultant for Affirmity. Dr. McNiel specializes in workforce analytics using both qualitative and quantitative methods to analyze employment practices and inform employment decisions. Dr. McNiel holds a PhD in Industrial and Organizational Psychology, and is licensed to practice psychology by the State of Texas. Connect with him on LinkedIn.